Near field radar for representing surface features

Table of Contents

- Introduction + Objective

- Devices used

- Camera

- Raspberry Pi

- Radar devices considered

- Signal Processing

- Results

- Links

- The project group

Project goals

Our original objective was to demonstrate that an imaging radar can be used to visualize the surrounding environment, and that this visualization can be combined with live video from a camera. The idea is to give the driver of a vehicle a better understanding of their surroundings than a camera alone can provide. Cameras lack depth perception and suffer in adverse conditions such as darkness, rain and fog. Using a radar in conjunction with a camera could alleviate these weaknesses.

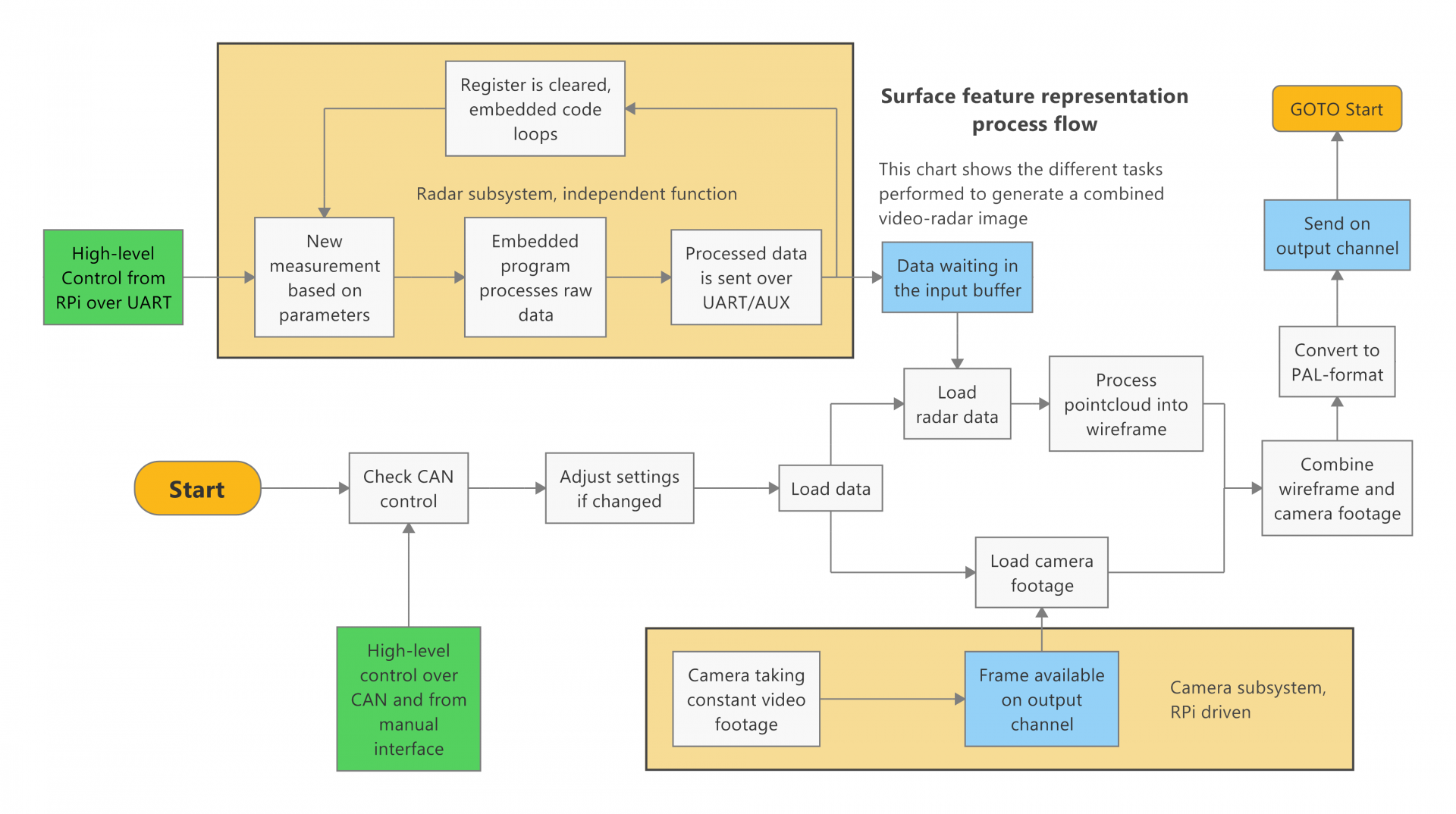

Some consideration was given to interoperability with other systems. It should be possible to output the combined footage in PAL video format and to control the device via CAN bus.

During the project it turned out that we could not acquire a sufficient sensor within our limited budget and time to satisfy these goals. Instead of calling it quits, we decided to attempt something with the limited hardware we were able to get, even if it was not exactly what we had originally set out to do. Unfortunately, at that point we did not have enough time to finish this new endeavor.

Devices used

Below is a brief description of the project components that would have made up the device.

Camera

For the camera, we obtained a Raspberry Pi Camera Module v2 from Sähköpaja. This was initially for prototyping only, but as other difficulties mounted we kept using this one.

The camera works very smoothly with Raspberry Pi and can output digital video footage at good quality.

Raspberry Pi

The Raspberry Pi 4 we used has 4 gigabytes of LPDDR4 RAM and a quad-core 64-bit ARM Cortex-A72 processor. A 32 gigabyte SD memory card allowed us to boot it with Raspbian OS. The Raspberry Pi 4 has an Ethernet port, which was used together with a micro-USB serial interface to communicate with the radar device. The project code was written in Python 3.8.

Radar Devices Considered

Due to the ongoing pandemic wreaking havoc on the market, we had difficulties obtaining a suitable radar device. Here are some devices we looked into:

- MMWCAS-RF-EVM from Texas Instruments

- This cascading configuration radar evaluation module combines several radar chips into one coherent unit. It would have had sufficient specifications for imaging purposes, but the device price starts at 1200€.

- Multiple high-end radar units unavailable for student prototyping

- Examples include General Radar Mark IV and RadSee-radar

- AWR1843BOOST from Texas Instruments

- This single-chip radar evaluation module would have been capable of 3D measurements and maybe even imaging, but was not in stock at any retailer.

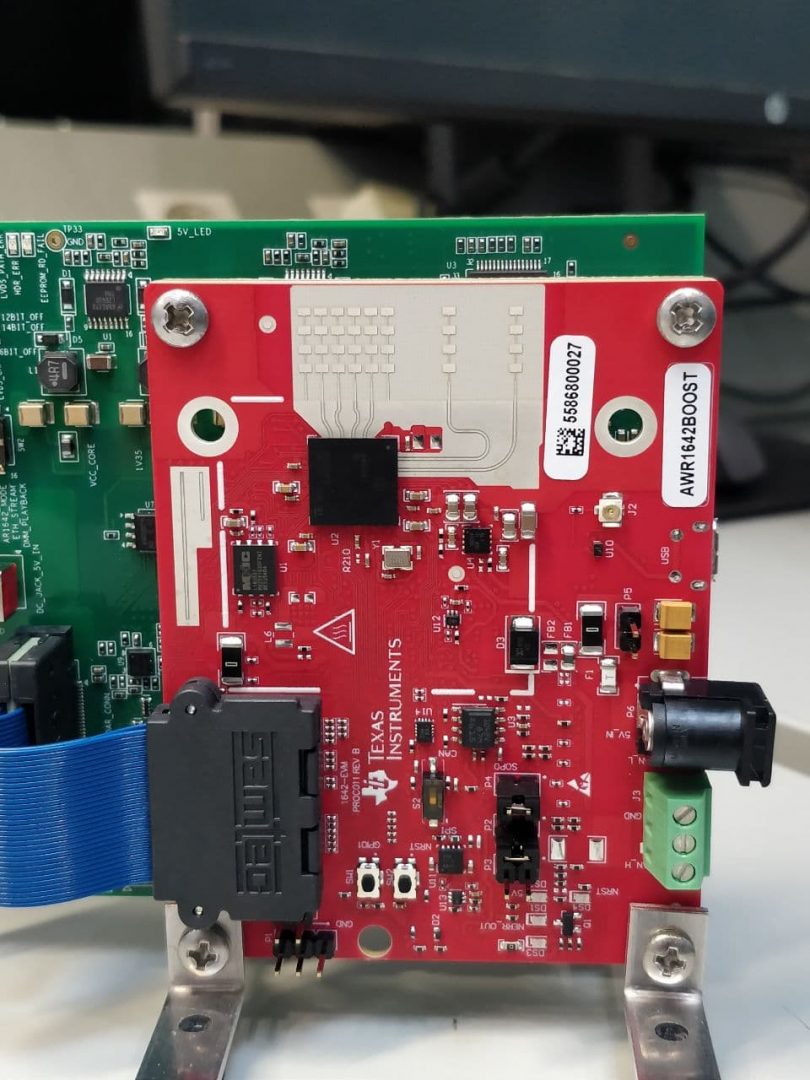

- AWR1642BOOST from Texas Instruments

- Another single-chip radar EVM, but this one was in stock! The reason for this is its lower quality and capabilities.

In the end we had to go with the sub-optimal AWR1642BOOST. The device is functional and we managed to install an embedded program on it, but connecting it to a computer or Raspberry Pi and reading the data turned out to be too time-consuming.

Signal processing

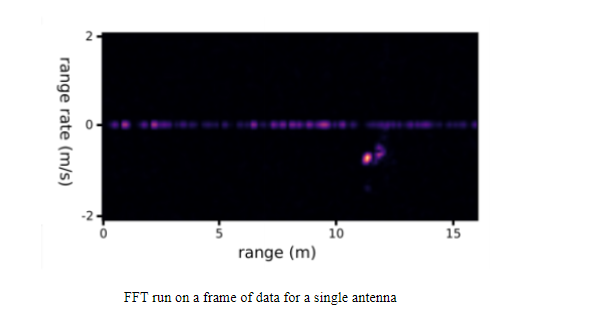

The radar antennas emit pulses with linearly increasing frequency, referred to as “chirps”. The pulses are reflected by the environment and the reflections are detected by the receive antennas. The signal detected by each receive antenna is down-converted by mixing it with the transmitted signal, and the result is sampled over the duration of a single chirp. The sampled signals of consecutive chirps are then stacked into 2D arrays referred to as “frames”, and FFTs are performed across both dimensions of each array, resulting in a heatmap showing the range and velocity of the reflected signals.
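The two FFT steps described above can be sketched as follows. This is a minimal illustration under stated assumptions, not the project's processing code: the frame is assumed to be a list of chirps, each a list of complex samples; the function names `dft`, `range_doppler_map`, `simulate_frame` and `peak_bin` are hypothetical; and a plain DFT from the standard library stands in for an optimized FFT (for example `numpy.fft.fft2`) to keep the example dependency-free.

```python
import cmath
import math


def dft(samples):
    """Plain discrete Fourier transform of a list of complex samples."""
    n = len(samples)
    return [
        sum(samples[k] * cmath.exp(-2j * math.pi * m * k / n) for k in range(n))
        for m in range(n)
    ]


def range_doppler_map(frame):
    """Turn frame[chirp][sample] into magnitudes[doppler_bin][range_bin]."""
    num_chirps = len(frame)
    num_samples = len(frame[0])
    # First transform: along each chirp, which resolves range.
    range_spectra = [dft(chirp) for chirp in frame]
    # Second transform: across chirps for every range bin, which resolves velocity.
    heatmap = [[0.0] * num_samples for _ in range(num_chirps)]
    for r in range(num_samples):
        column = dft([range_spectra[c][r] for c in range(num_chirps)])
        for d in range(num_chirps):
            heatmap[d][r] = abs(column[d])
    return heatmap


def simulate_frame(num_chirps, num_samples, range_bin, doppler_bin):
    """Synthetic down-converted signal of one ideal point target (assumption)."""
    return [
        [
            cmath.exp(2j * math.pi * (range_bin * s / num_samples
                                      + doppler_bin * c / num_chirps))
            for s in range(num_samples)
        ]
        for c in range(num_chirps)
    ]


def peak_bin(heatmap):
    """Return (doppler_bin, range_bin) of the strongest cell in the heatmap."""
    cells = ((d, r) for d in range(len(heatmap)) for r in range(len(heatmap[0])))
    return max(cells, key=lambda cell: heatmap[cell[0]][cell[1]])


if __name__ == "__main__":
    frame = simulate_frame(num_chirps=8, num_samples=16, range_bin=5, doppler_bin=2)
    print(peak_bin(range_doppler_map(frame)))
```

The Doppler axis is left unshifted here, so bins above half the chirp count correspond to negative velocities. Converting bin indices to metres and metres per second depends on the chirp configuration, which is not covered in this sketch.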

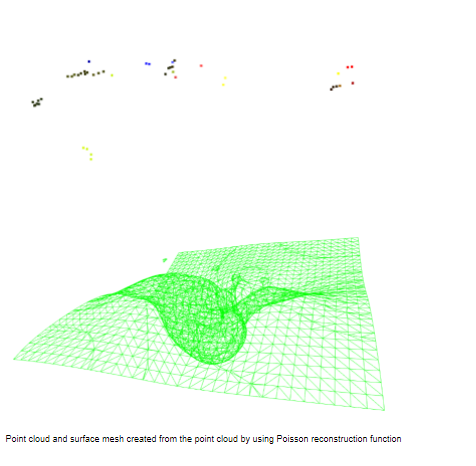

The horizontal and vertical angles of the reflections can be calculated from the phase difference between the signals of the receive antennas. When the range and the vertical and horizontal angles of the reflections are known, the data can be presented as point clouds (lists of xyz coordinates). Point clouds can be processed further, for example with Poisson reconstruction, to create surface meshes.
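The step from phase differences to xyz coordinates can be sketched as below. It is an illustration only, and several things are assumptions rather than facts from the project: neighbouring receive antennas are assumed to be half a wavelength apart, the y axis is assumed to point straight out of the antenna board, and the names `direction_cosine`, `arrival_angle` and `to_xyz` are hypothetical. The relation used is the standard one for a plane wave, where a phase difference Δφ between antennas spaced d apart satisfies Δφ = 2π·d·sin(θ)/λ.

```python
import math


def direction_cosine(delta_phase, spacing_wavelengths=0.5):
    """Component of the arrival direction along the antenna axis.

    delta_phase is the phase difference in radians between two neighbouring
    receive antennas; spacing_wavelengths is their spacing d / lambda
    (0.5 is an assumed value, not taken from the project).
    """
    value = delta_phase / (2.0 * math.pi * spacing_wavelengths)
    # Clamp so that measurement noise cannot push the value outside [-1, 1].
    return max(-1.0, min(1.0, value))


def arrival_angle(delta_phase, spacing_wavelengths=0.5):
    """Angle of arrival in radians for a single antenna axis."""
    return math.asin(direction_cosine(delta_phase, spacing_wavelengths))


def to_xyz(range_m, delta_phase_h, delta_phase_v, spacing_wavelengths=0.5):
    """Convert one detection to (x, y, z) in metres.

    x points right, z points up and y points forward (assumed convention).
    """
    u = direction_cosine(delta_phase_h, spacing_wavelengths)  # horizontal part
    w = direction_cosine(delta_phase_v, spacing_wavelengths)  # vertical part
    # The direction vector has unit length, so the forward part follows from u and w.
    forward = math.sqrt(max(0.0, 1.0 - u * u - w * w))
    return (range_m * u, range_m * forward, range_m * w)


if __name__ == "__main__":
    # Each detection: (range in metres, horizontal and vertical phase difference).
    detections = [(2.0, 0.0, 0.0), (2.0, math.pi / 2.0, 0.0)]
    point_cloud = [to_xyz(r, dh, dv) for r, dh, dv in detections]
    print(point_cloud)
```

A list produced this way is the kind of point cloud that a surface reconstruction step could take as input. A real device would also need calibration of the per-antenna phase offsets, which this sketch ignores.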

Results

The above diagram presents all the subprocesses needed to get the desired results. In the end, we never got the “Load radar data” block to work by any of the approaches we tried, which broke the process flow and denied us the chance to even test an integrated system.

Links:

GitHub: https://github.com/JaakkoHeii/Nearfield-radar-software

License:

Code: the MIT license

Other works: Creative Commons (CC BY 4.0)

The project group

Lauri Pallonen, Patrick Linnanen, Jaakko Heikkinen