Last Mile Autonomous Delivery

Objective

- The robot should be able to segment the path it is supposed to follow. The segmentation should be clean, with little noise, and other objects of interest should also be segmented with adequate quality. The model should work under different conditions of location, weather, path curvature, etc.

- For obstacle detection, the system should be able to detect obstacles of different sizes. Detected actors should be localized accurately (colored or marked with a bounding box) and accompanied by rich information.

- Finally, path planning should behave reliably, producing paths that agree with what a human would choose. The paths also have to be realistic, avoiding sharp turns that the robot could not complete at a reasonable speed.

- As a whole, the system should run consistently and fast enough for the robot to make timely maneuvers in complex situations.

Path segmentation

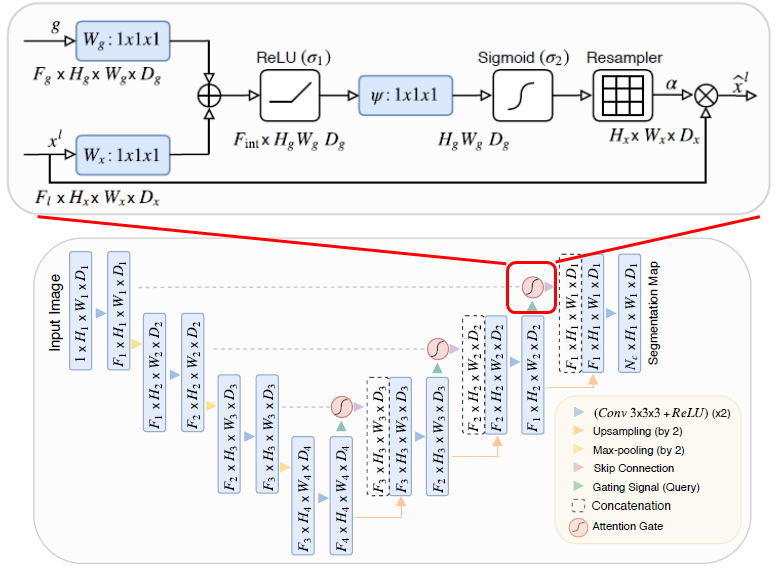

For the robot to navigate, it first needs to understand the environment around it. It then needs a mechanism to isolate the different subjects in the scene, so that it can focus on meaningful signals and discard uninteresting information. In this project, we used a UNet with attention to perform both semantic segmentation and drivable area segmentation, giving the robot an understanding of its surroundings.

Figure 1: UNet with attention mechanism.

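In an attention UNet, the attention mechanism is usually an additive attention gate on each skip connection: the decoder's coarser gating signal decides, pixel by pixel, how much of the encoder's skip features to pass on. The sketch below is a minimal pure-Python illustration of such a gate, assuming the formulation from the Attention U-Net paper by Oktay et al. with the 1×1 projections written as per-pixel matrix products. The function and parameter names are hypothetical and not taken from this project's code, and the gating signal is assumed to be already resampled to the resolution of the skip features.

```python
import math


def _matvec(weights, vec):
    """Multiply an (out x in) weight matrix by one pixel's channel vector (a 1x1 convolution)."""
    return [sum(w * v for w, v in zip(row, vec)) for row in weights]


def attention_gate(x, g, w_x, w_g, psi, bias=0.0):
    """Additive attention gate applied independently at every pixel.

    x    : list of pixels, each a list of C_x skip-connection channels
    g    : list of pixels, each a list of C_g gating channels
           (assumed already resampled to the same spatial size as x)
    w_x  : (C_int x C_x) projection weights for the skip features
    w_g  : (C_int x C_g) projection weights for the gating signal
    psi  : length-C_int vector that maps the intermediate features to one score
    Returns the gated skip features and the per-pixel attention coefficients.
    """
    gated, alphas = [], []
    for xi, gi in zip(x, g):
        # Add the two projections, then apply ReLU
        q = [max(0.0, a + b) for a, b in zip(_matvec(w_x, xi), _matvec(w_g, gi))]
        # Collapse to a scalar score and squash it into (0, 1) with a sigmoid
        score = sum(p * qi for p, qi in zip(psi, q)) + bias
        alpha = 1.0 / (1.0 + math.exp(-score))
        alphas.append(alpha)
        # Scale every skip channel at this pixel by the attention coefficient
        gated.append([alpha * v for v in xi])
    return gated, alphas


# Usage: two pixels; the second has a much stronger gating signal.
x = [[1.0, 2.0], [3.0, 4.0]]
g = [[0.0], [5.0]]
gated, alphas = attention_gate(x, g, w_x=[[0.1, 0.1]], w_g=[[1.0]], psi=[1.0])
```

Pixels where the gating signal is strong receive a coefficient close to 1 and keep their skip features, while the rest are suppressed, which is how the gate lets the decoder focus on relevant regions.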

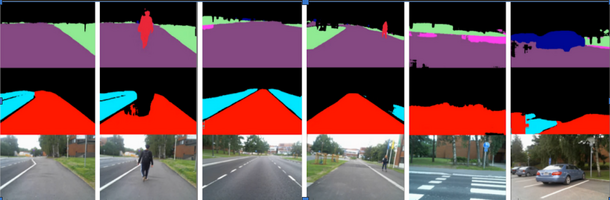

We trained the semantic segmentation model on the Cityscapes dataset and the drivable area segmentation model on the Berkeley DeepDrive dataset. The outputs of the two models were combined to produce the final segmentation.

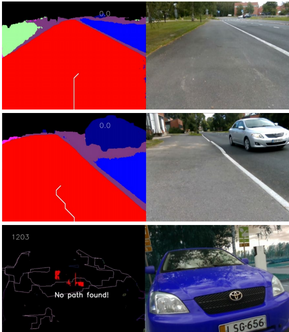

Figure 2: Results from the two path segmentation models. Top row: segmentation of different object classes, including road, sidewalk, terrain, car, and person. Second row: segmentation of the drivable lanes of the road, which separates the main road from the sidewalk. Bottom row: the corresponding camera images.


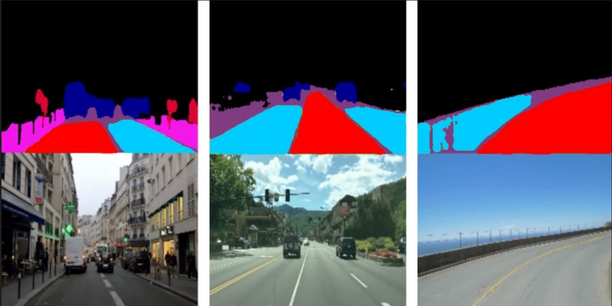

Figure 3: Combined output of the two models.

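The exact rule used to merge the two outputs is not described here. A minimal sketch of one plausible rule follows, assuming both models emit per-pixel label maps of the same size: pixels that the semantic model labels as road are refined with the drivable-area model's direct/alternative split, and every other pixel keeps its semantic label, so cars, people, and sidewalk are never overwritten. All label IDs and the function name are hypothetical placeholders; check the IDs your trained models actually produce.

```python
# Hypothetical label IDs: replace them with the IDs your models actually emit.
ROAD = 0          # semantic model: road
DIRECT_LANE = 0   # drivable model: the area directly ahead
ALT_LANE = 1      # drivable model: an adjacent drivable area

# New IDs for the fused map, chosen outside the semantic label range
FUSED_DIRECT = 100
FUSED_ALT = 101


def fuse_masks(semantic, drivable):
    """Combine a semantic label map with a drivable-area label map.

    Both inputs are 2-D lists of equal shape. Road pixels are split into
    direct/alternative drivable areas; all other pixels keep their semantic label.
    """
    if len(semantic) != len(drivable) or any(
        len(a) != len(b) for a, b in zip(semantic, drivable)
    ):
        raise ValueError("masks must have the same shape")
    fused = []
    for sem_row, drv_row in zip(semantic, drivable):
        out = []
        for s, d in zip(sem_row, drv_row):
            if s == ROAD and d == DIRECT_LANE:
                out.append(FUSED_DIRECT)
            elif s == ROAD and d == ALT_LANE:
                out.append(FUSED_ALT)
            else:
                out.append(s)  # keep the semantic class (sidewalk, car, person, ...)
        fused.append(out)
    return fused


# Usage: a 2x3 toy image
semantic = [[0, 0, 1], [13, 0, 1]]
drivable = [[0, 1, 2], [0, 0, 2]]
fused = fuse_masks(semantic, drivable)  # [[100, 101, 1], [13, 100, 1]]
```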

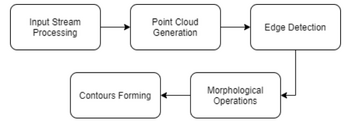

Obstacle Detection

Obstacle detection is one of the most important components of an autonomous vehicle. It prevents the robot from damaging itself or harming people around it during operation, ensuring smooth deployment. It is therefore a very actively researched area within computer vision, with many breakthroughs in recent years. For our project, we opted for a simple implementation with a single obstacle class, covering both static and dynamic obstacles. The steps implemented are as follows:

Figure 4: Overview of our object detection pipeline.


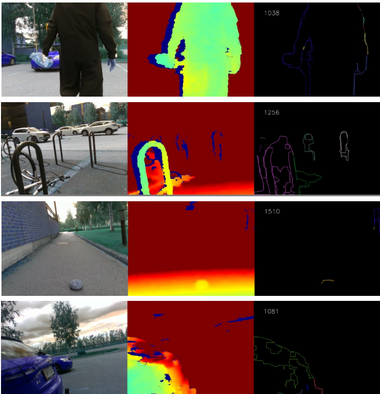

Figure 5: Results at each level of the obstacle detection process.

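The individual steps are shown in Figure 4 rather than spelled out in the text. As a hedged illustration of only a final single-class grouping step, the sketch below assumes a binary obstacle mask is already available (for example, derived from the segmentation or from the depth camera). It uses connected-component labeling to turn that mask into bounding boxes, and attaches the pixel area and the nearest depth as extra information. The function name, the `min_area` noise filter, and the choice of 4-connectivity are assumptions, not the project's documented method.

```python
from collections import deque


def detect_obstacles(mask, depth=None, min_area=20):
    """Group obstacle pixels into single-class detections.

    mask     : 2-D list of bools, True where a pixel is considered an obstacle
    depth    : optional 2-D list of depths in metres, same shape as mask
    min_area : components with fewer pixels are discarded as noise
    Returns dicts with a bounding box (x_min, y_min, x_max, y_max), the pixel
    area and, if depth is given, the distance of the nearest pixel.
    """
    h = len(mask)
    w = len(mask[0]) if h else 0
    seen = [[False] * w for _ in range(h)]
    detections = []
    for y in range(h):
        for x in range(w):
            if not mask[y][x] or seen[y][x]:
                continue
            # Breadth-first flood fill over 4-connected neighbours
            queue = deque([(y, x)])
            seen[y][x] = True
            pixels = []
            while queue:
                cy, cx = queue.popleft()
                pixels.append((cy, cx))
                for ny, nx in ((cy + 1, cx), (cy - 1, cx), (cy, cx + 1), (cy, cx - 1)):
                    if 0 <= ny < h and 0 <= nx < w and mask[ny][nx] and not seen[ny][nx]:
                        seen[ny][nx] = True
                        queue.append((ny, nx))
            if len(pixels) < min_area:
                continue  # drop specks that are likely segmentation noise
            ys = [p[0] for p in pixels]
            xs = [p[1] for p in pixels]
            det = {"box": (min(xs), min(ys), max(xs), max(ys)), "area": len(pixels)}
            if depth is not None:
                det["nearest_m"] = min(depth[py][px] for py, px in pixels)
            detections.append(det)
    return detections
```

Because every obstacle falls into one class, no classifier is needed at this stage; the bounding box and nearest distance are enough for the planner to avoid it.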

Path Planning

In this module, we identify a path along which the robot can travel without colliding with any detected obstacle or straying from the segmented path. There are many approaches to this problem, including graph traversal algorithms, sampling-based methods, and artificial potential fields. We chose the Weighted A* search algorithm, a variant of the well-known A* algorithm, because of its simplicity and efficiency.
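Weighted A* differs from A* only in its priority function, f(n) = g(n) + w·h(n) with w > 1. This trades optimality (with a consistent heuristic, the returned path costs at most w times the optimum) for fewer node expansions. The sketch below is a minimal version on an occupancy grid, assuming the segmented path and the obstacle boxes have already been projected onto a common 2-D grid. `build_grid`, the safety `margin`, 8-connected moves, and the octile heuristic are illustrative assumptions rather than details of this project.

```python
import heapq
import math


def build_grid(path_mask, boxes, margin=1):
    """Turn a segmented-path mask and obstacle boxes into a free/blocked grid.

    path_mask : 2-D list of bools, True where the segmented path is
    boxes     : list of (x_min, y_min, x_max, y_max) obstacle boxes
    margin    : extra cells blocked around each box as a safety buffer
    """
    h, w = len(path_mask), len(path_mask[0])
    free = [[bool(v) for v in row] for row in path_mask]
    for x0, y0, x1, y1 in boxes:
        for y in range(max(0, y0 - margin), min(h, y1 + margin + 1)):
            for x in range(max(0, x0 - margin), min(w, x1 + margin + 1)):
                free[y][x] = False
    return free


def weighted_astar(free, start, goal, weight=2.0):
    """Weighted A* on an 8-connected grid with priority f(n) = g(n) + weight * h(n).

    start, goal : (row, col) cells. Returns the list of cells, or None if unreachable.
    Closed cells are never reopened, which keeps the search fast.
    """
    h, w = len(free), len(free[0])

    def heuristic(cell):
        # Octile distance: consistent for moves costing 1 (straight) and sqrt(2) (diagonal)
        dy, dx = abs(cell[0] - goal[0]), abs(cell[1] - goal[1])
        return max(dy, dx) + (math.sqrt(2) - 1) * min(dy, dx)

    moves = [(-1, 0), (1, 0), (0, -1), (0, 1), (-1, -1), (-1, 1), (1, -1), (1, 1)]
    g_cost = {start: 0.0}
    parent = {start: None}
    open_heap = [(weight * heuristic(start), 0.0, start)]
    closed = set()
    while open_heap:
        _, g, cell = heapq.heappop(open_heap)
        if cell in closed:
            continue  # stale heap entry
        if cell == goal:
            path = []
            while cell is not None:
                path.append(cell)
                cell = parent[cell]
            return path[::-1]
        closed.add(cell)
        for dy, dx in moves:
            ny, nx = cell[0] + dy, cell[1] + dx
            nxt = (ny, nx)
            if not (0 <= ny < h and 0 <= nx < w) or not free[ny][nx] or nxt in closed:
                continue
            new_g = g + (math.sqrt(2) if dy and dx else 1.0)
            if new_g < g_cost.get(nxt, math.inf):
                g_cost[nxt] = new_g
                parent[nxt] = cell
                heapq.heappush(open_heap, (new_g + weight * heuristic(nxt), new_g, nxt))
    return None


# Usage: a 5x5 path with an obstacle in the middle column
free = build_grid([[True] * 5 for _ in range(5)], [(2, 1, 2, 3)], margin=0)
route = weighted_astar(free, (2, 0), (2, 4), weight=1.5)
```

A larger `weight` makes the search greedier toward the goal. The raw grid path can still contain sharp corners, so a smoothing step would normally follow before the path is sent to the motors.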

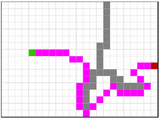

Figure 6: Path planning illustration.


Figure 7: Path planning in different scenarios.


Right: RGB images. Left: Output of path planning.

Testing robot

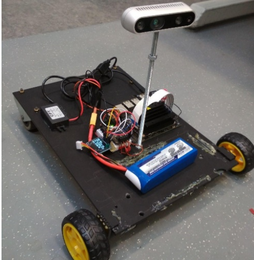

Hardware used for the second prototype:

– Jetson Xavier

– Acrylic sheet as frame

– 11.1 V LiPo battery as power supply

– 12 V to 5 V converter

– 2 × 11 V motors + wheels

– FeatherWing 5–12 V motor driver

– PiOLED display

– Intel RealSense Depth Camera

– Xbox controller

Figure 8: Prototype of the test robot.


Conclusion

- In this project, we presented a vision-based system that can understand its surrounding environment. The path segmentation model tells the robot where it can go, path planning shows where it should go, and obstacle detection tells it what to avoid. We showed that our system can run in real time on a computationally constrained platform such as the Jetson Xavier NX.

- Autonomous robots, especially vehicles, are exciting innovations. They open the way for a wide range of applications that can greatly benefit people. By transitioning to autonomous systems, countless business processes could be improved and many lives could be saved. Recognizing this opportunity, we hope this project can serve as a proof of concept for the feasibility of adopting such technology to improve lives in Finland.

- Working on this project gave us the opportunity to apply our knowledge in practice and push our skills to a new level, and it also taught us the empathy and discipline needed to work in an international team. We learned about current advances in computer vision, AI, and robotic hardware, as well as their strengths and weaknesses in practical settings.

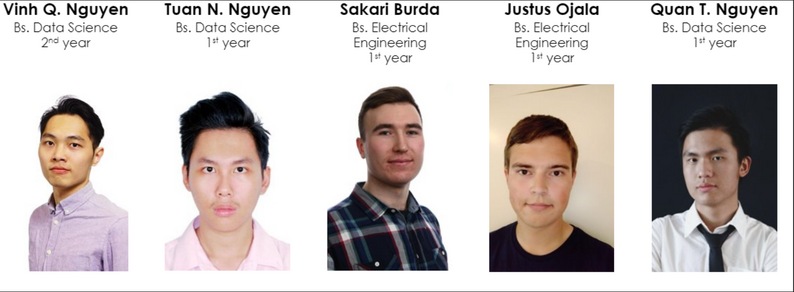

Our Team Members

Links

GitHub – Final Report – Presentation

License

Code: MIT License

Other material: Creative Commons (CC BY 4.0)