Nutshell

What did we do? For whom? Why?

Our project was done in cooperation with Futurice. They tasked us with creating a self-driving race car the size of a normal radio-controlled (RC) car and beating a rival team’s car, “Markku AI”. The car is based on an existing self-driving car system called Donkeycar, which has been adapted to run on the Jetson Nano, a single-board computer made by Nvidia for AI workloads. In addition, the project called for custom 3D-printed parts.

Car chassis

The chassis used in the project was the Tamiya TT-02, which we bought as a DIY kit to save on costs. It is a regular 1/10 scale RC car chassis with plenty of room for the devices a self-driving car needs.

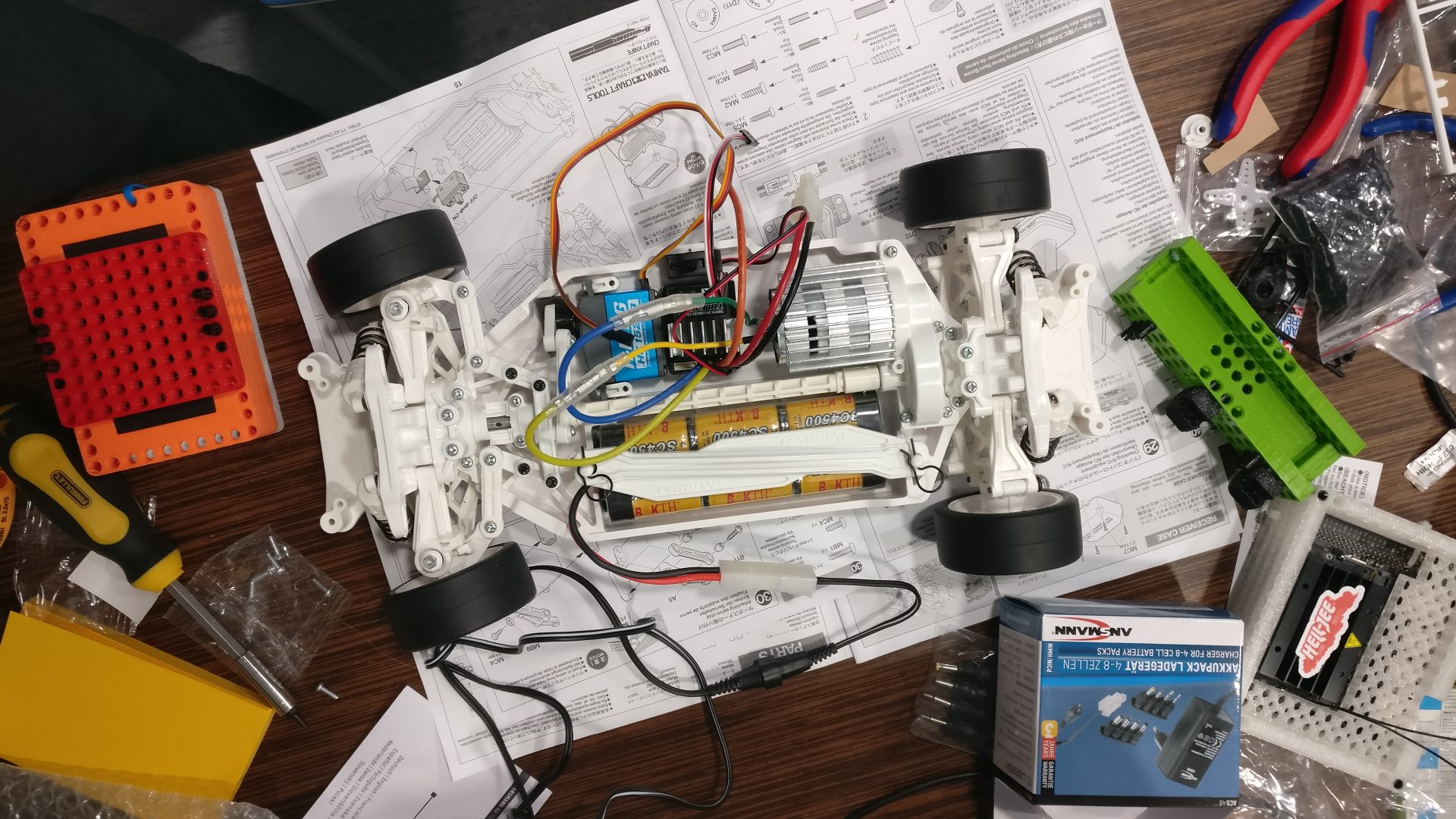

Assembled car chassis with the parts included in the kit:

Chassis with the electronics essential to a regular RC car (car battery, electronic speed controller, steering servo), excluding a receiver for a controller, since we are using Jetson Nano to control the car instead:

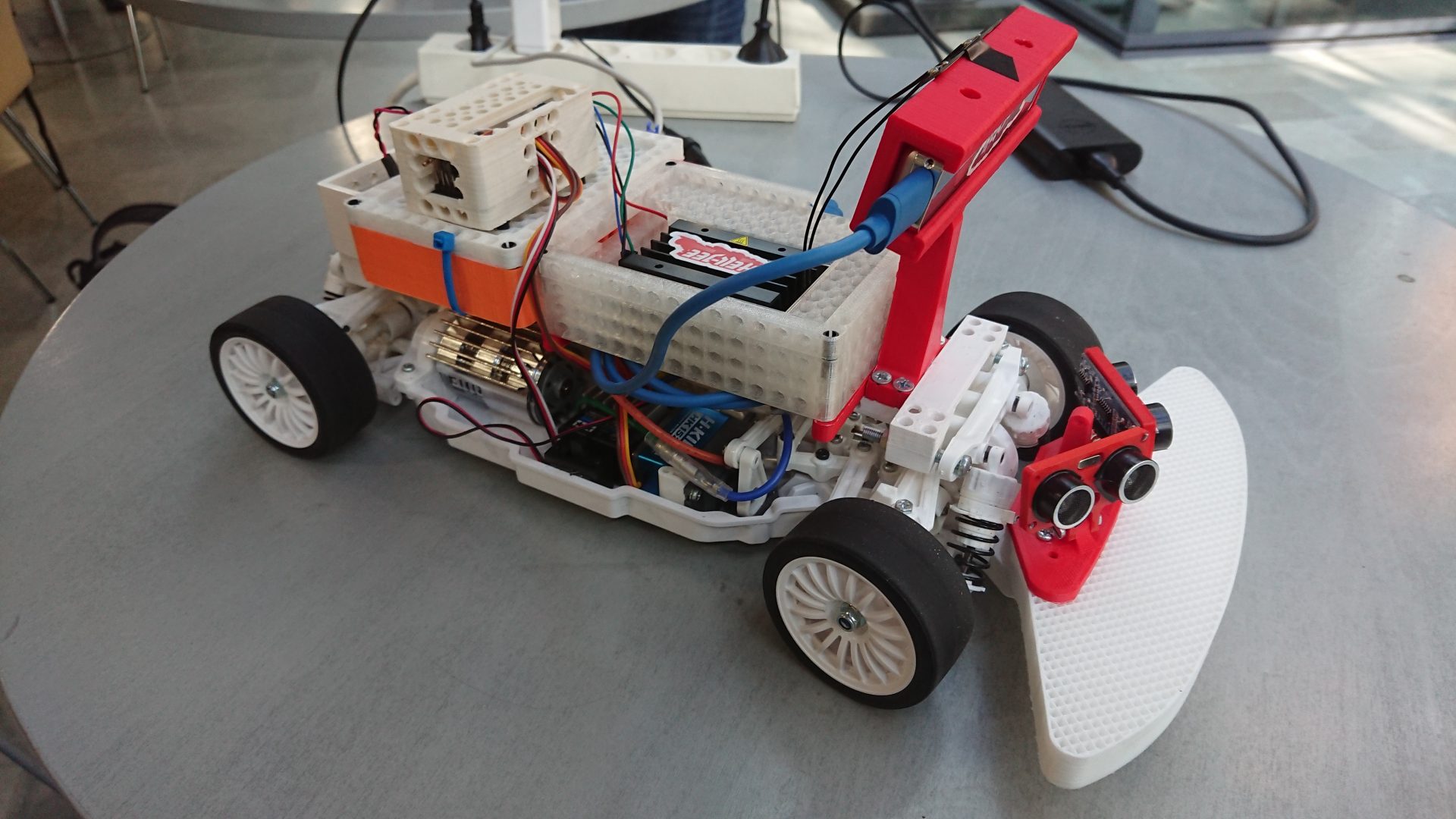

The finished product with Jetson Nano, Intel RealSense T265, Sunfounder PCA9685, a buck converter, a USB power bank and all of the 3D-printed parts:

3D modeling and printing

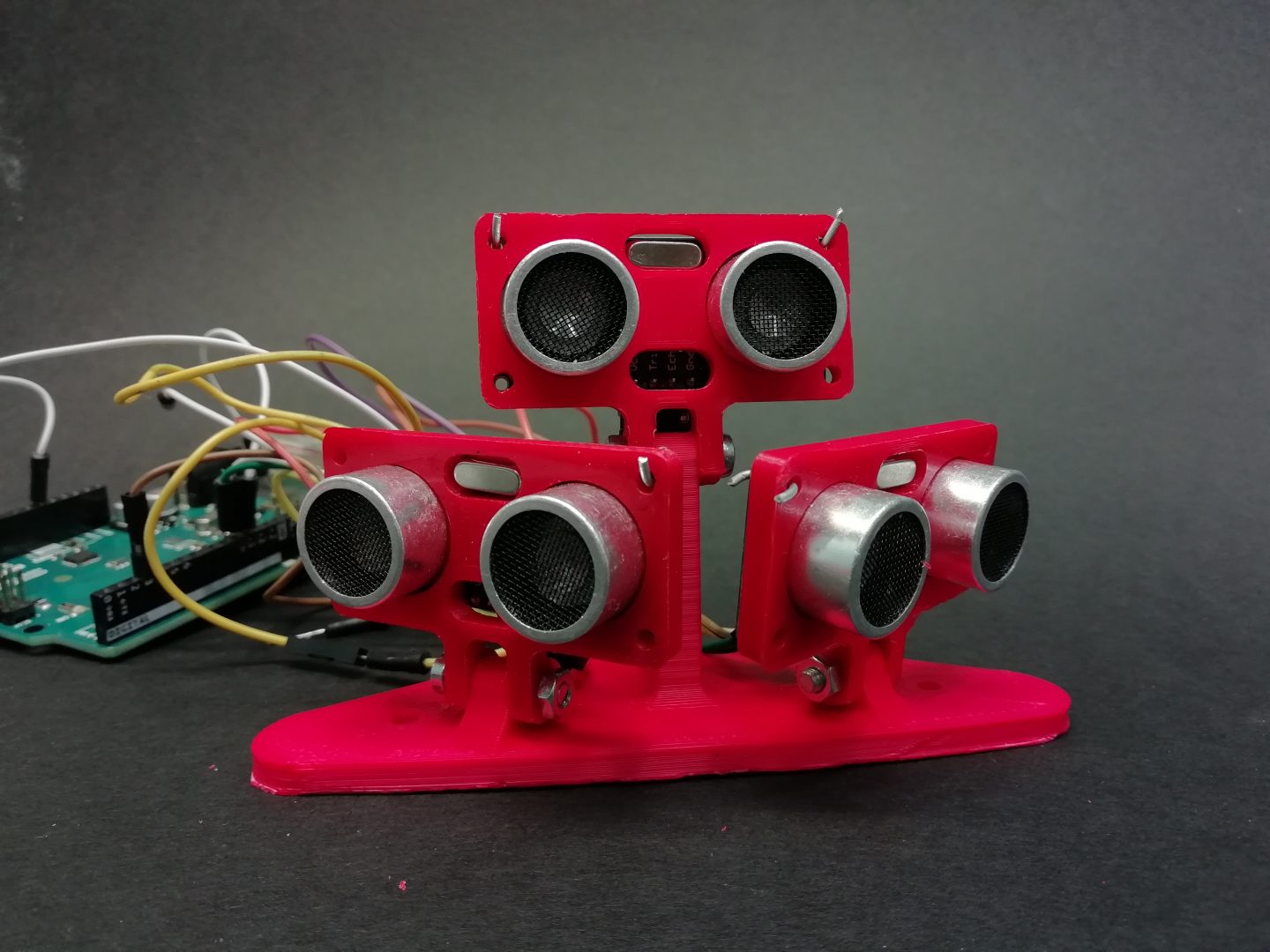

The original Tamiya TT-02 bumper was too narrow to protect the front wheels properly, and an impact to the wheels could damage the steering mechanism. We therefore needed a broader bumper with better shock absorption. The project also called for a mount for three ultrasonic sensors and a stand for the Intel RealSense T265 camera. Both needed an adjustable vertical angle so that we could fine-tune their orientation.

Below are 3D models of the custom parts we designed for this project. You can turn and zoom the objects with your mouse. If the models don’t load correctly, please visit this git repository and view them there.

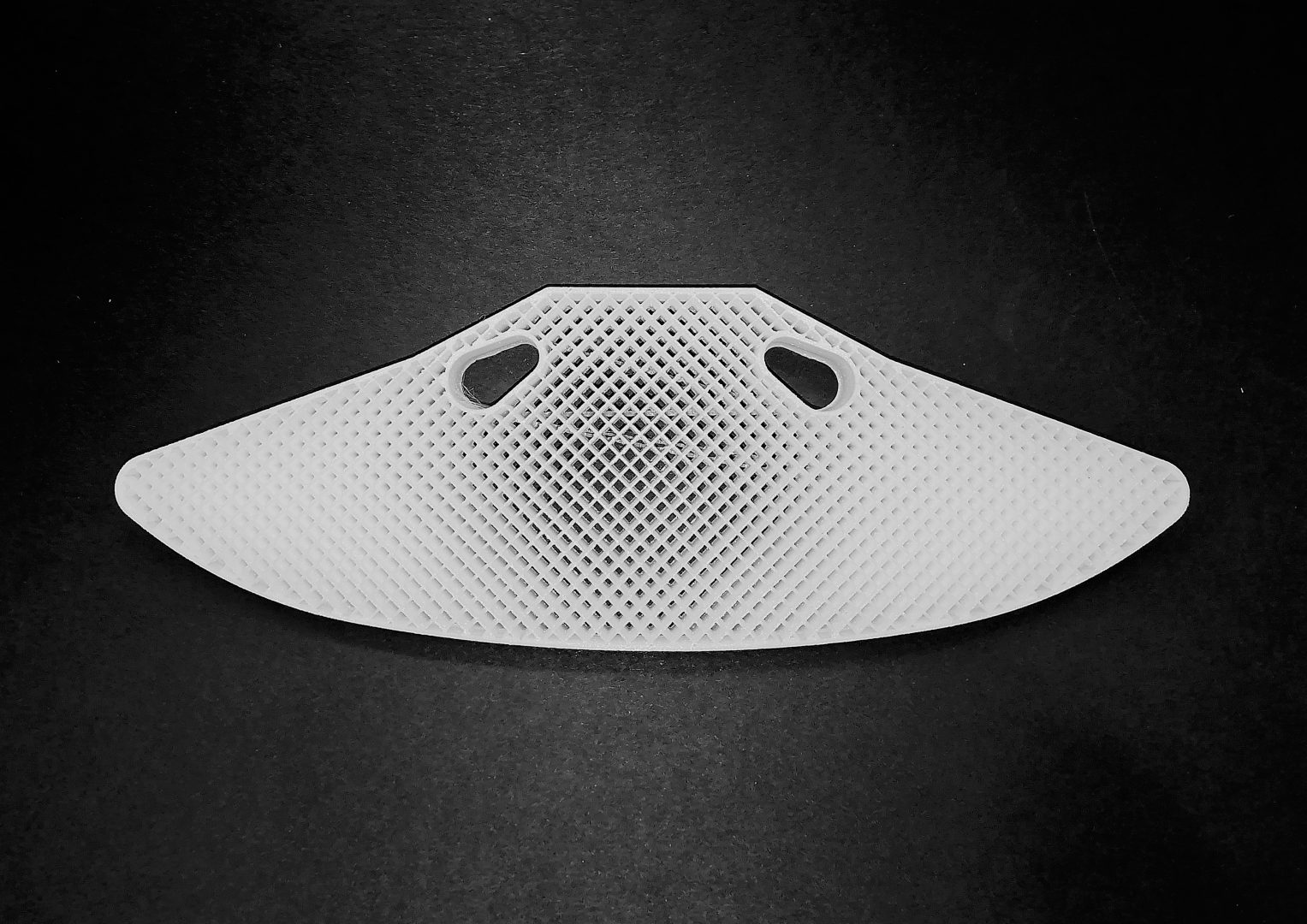

3D model view of the bumper (click for the Thingiverse page).

After several experiments and prototypes, we chose thermoplastic polyurethane (TPU) 95A as the bumper material. Print settings are important for optimal results: we used a grid infill pattern with 35% infill density and an infill wall count of two. The number of top and bottom layers was set to zero, making the design hollow and thus giving it more flexibility and better shock absorption.

3D model view of the camera stand for Intel RealSense T265 (click for the Thingiverse page).

3D model view of the ultrasonic sensor mount (click for the Thingiverse page).

In addition to our own designs, we used PELA blocks, which are LEGO-compatible 3D-printed blocks and gadgets. PELA blocks are designed to be easily customized, so they are great for prototyping. We used the following PELA blocks in this project:

- PELA Technic Beam

- PELA Drift Car Center Beam

- PELA Arduino Uno Technic Mount

- PELA Jetson Nano Technic Mount

- PELA Powerbank Technic Mount

Image gallery of 3D prints:

Intel RealSense T265

The Intel RealSense T265 is a compact, low-power camera capable of simultaneous localization and mapping (SLAM). Our Futurice contacts were able to lend us one, and we were excited to test its possibilities for our use case.

The camera has two black-and-white fisheye lenses at the front, and it tracks motion in all six degrees of freedom. By combining the camera feeds with its other sensors, it can estimate its position relative to the starting point quite well. It does all of this on its built-in custom SoC. The USB 3 connection also makes it possible to process the live image feed.

Intel provides a free development kit, the Intel RealSense SDK, written in C and C++. We were especially interested in pyrealsense2, the Python wrapper for the newest version of the SDK.

The library provides a live feed of sensor values and camera frames. Some of the features are not well documented, but at least some working examples can be found on the source page.
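To give an idea of what reading the sensor feed looks like, here is a minimal sketch modeled on the pose example that ships with the SDK. It is not the code we ran on the car: the helper names `pose_to_dict` and `read_poses` are made up for illustration, and `read_poses` needs a connected T265 and an installed pyrealsense2.

```python
def pose_to_dict(pose_data):
    """Flatten one pose sample into plain numbers.

    Position is in metres relative to the starting point,
    rotation is a quaternion.
    """
    t, r = pose_data.translation, pose_data.rotation
    return {
        "x": t.x, "y": t.y, "z": t.z,
        "qw": r.w, "qx": r.x, "qy": r.y, "qz": r.z,
        "confidence": pose_data.tracker_confidence,
    }


def read_poses(count=50):
    """Yield up to `count` pose samples from a connected T265 (hypothetical helper)."""
    # Imported here so that pose_to_dict can be used without the SDK installed.
    import pyrealsense2 as rs

    pipe = rs.pipeline()
    cfg = rs.config()
    cfg.enable_stream(rs.stream.pose)  # request only the 6-DoF pose stream
    pipe.start(cfg)
    try:
        for _ in range(count):
            frames = pipe.wait_for_frames()
            pose = frames.get_pose_frame()
            if pose:  # skip framesets that carry no pose
                yield pose_to_dict(pose.get_pose_data())
    finally:
        pipe.stop()
```

With a camera attached, `for sample in read_poses(10): print(sample)` would print ten position and rotation samples.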

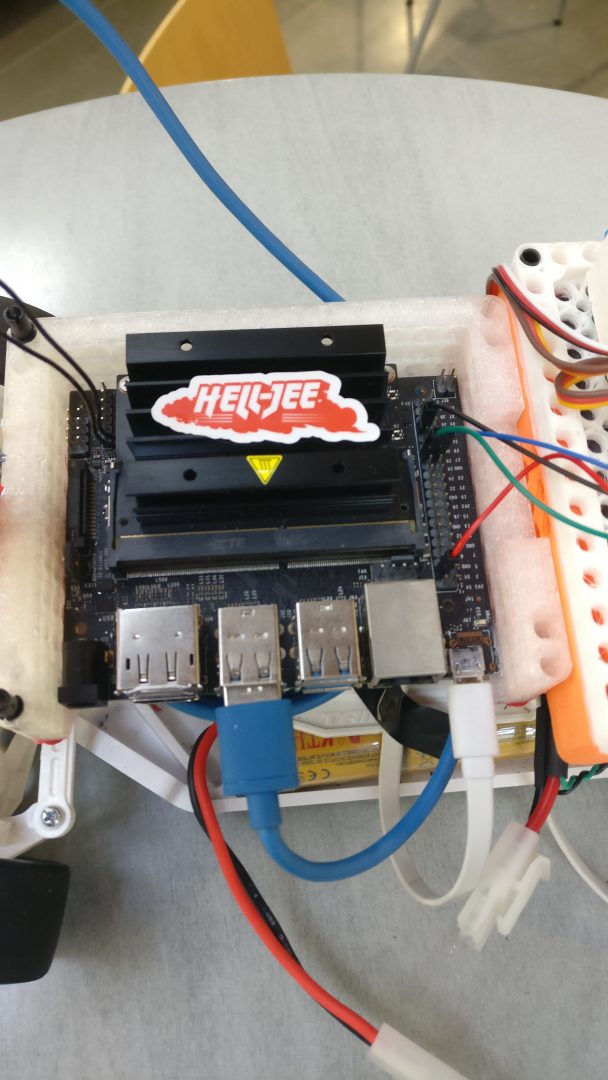

Jetson Nano

For our driving computer, we used the Jetson Nano, a single-board computer similar to the Raspberry Pi. It is designed for AI applications, so it was a good fit for our autonomous car.

It has:

- Quad-core ARM Cortex-A57 64-bit CPU

- Integrated 128-core Maxwell GPU

- 4GB LPDDR4 RAM

The Jetson Nano can be powered either through Micro-USB (5V⎓2A) or through a DC barrel jack (5V⎓4A), and it can run in either 10W or 5W mode. For the 10W mode we recommend a supply rated for 4A; for the 5W mode, 2A to 2.5A should suffice.
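The reasoning behind these current ratings is simple power arithmetic, P = V × I. The sketch below treats the mode names as rough power budgets for the board and ignores USB peripherals and conversion losses; both are simplifying assumptions, and the function names are ours.

```python
# Approximate power budget per mode, taken from the mode names (assumption).
MODE_BUDGET_W = {"10W": 10.0, "5W": 5.0}


def supply_watts(volts, amps):
    """Maximum power a supply can deliver: P = V * I."""
    return volts * amps


def headroom_watts(volts, amps, mode):
    """Power left over for peripherals after the mode's budget is used."""
    return supply_watts(volts, amps) - MODE_BUDGET_W[mode]


for label, amps in (("Micro-USB, 2A", 2.0), ("barrel jack, 4A", 4.0)):
    for mode in MODE_BUDGET_W:
        print(label, mode, "headroom:", headroom_watts(5.0, amps, mode), "W")
```

A 5V supply at 2A delivers at most 10 W, which leaves no margin in 10W mode once a camera or other peripherals draw power. At 4A the same board has 20 W available.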

Donkeycar

Donkeycar is an open-source project that includes all the essential code for creating an autonomous RC car. At the time of writing, it supports Raspberry Pi and Jetson Nano, and can be used on Windows, Mac or Ubuntu. It also supports various cameras, including PiCam and USB cameras. However, the camera we decided to use, the Intel RealSense T265, didn’t work out of the box with Donkeycar, so we had to add some code to make the two compatible. The files we changed in the project can be found here.
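Donkeycar is built from "parts": plain Python objects that the vehicle loop calls through `run()`, or through `update()` and `run_threaded()` when the part runs in its own thread. The sketch below shows the general shape of a camera part under that convention. It is not the code from our repository: the class name `T265ImagePart` and the `grab_frame` callable are hypothetical, and the frame source is injected so the part can be tried without hardware.

```python
import time


class T265ImagePart:
    """Hypothetical Donkeycar-style part that publishes the latest camera frame."""

    def __init__(self, grab_frame, poll_delay=0.0):
        # grab_frame: any zero-argument callable that returns one image
        self.grab_frame = grab_frame
        self.poll_delay = poll_delay
        self.frame = None
        self.running = True

    def update(self):
        # For threaded parts, Donkeycar runs this loop in a background thread.
        while self.running:
            self.frame = self.grab_frame()
            time.sleep(self.poll_delay)

    def run_threaded(self):
        # Called once per vehicle loop; returns the most recent frame.
        return self.frame

    def run(self):
        # Non-threaded variant: grab a frame synchronously and return it.
        self.frame = self.grab_frame()
        return self.frame

    def shutdown(self):
        # Stops the update() loop when the vehicle shuts down.
        self.running = False
```

A part like this would be registered on the vehicle with something along the lines of `V.add(T265ImagePart(grab), outputs=['cam/image_array'], threaded=True)`, where `grab` wraps the actual camera access.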

Computer vision

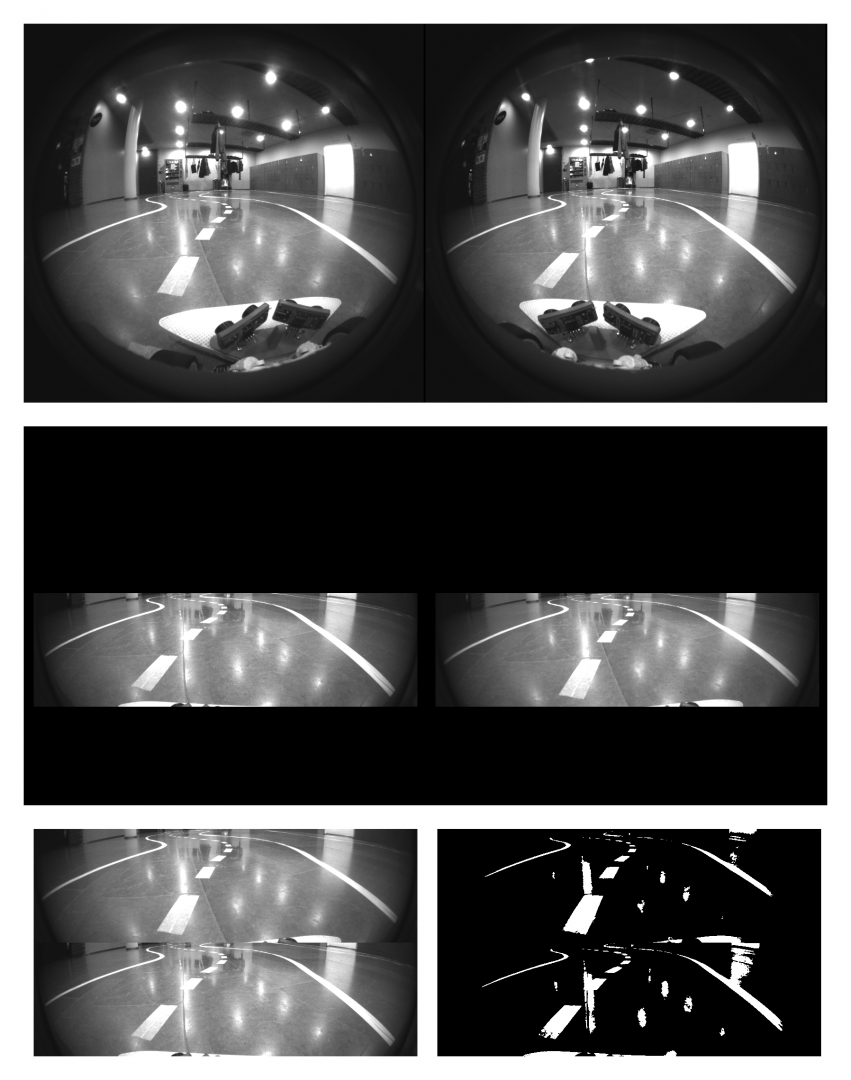

We knew that the final demo would take place on a new track with a much darker ground color than the track we tested on. That is why we pre-processed the images to reduce the impact of the different ground color. All of the pre-processing steps were done with the Python wrapper of the OpenCV library.

The image above shows the steps we applied to the image frames before feeding them to the machine learning model. We did not undistort the fisheye effect, because the results were good without it.

The top shows the unmodified images from both fisheye cameras concatenated together. Next, only the parts containing the actual track were cropped out and combined, as shown in the image at the bottom left.

The last step was thresholding, which turns every pixel either black or white. We did this so that the model would generalize and, hopefully, also work on slightly different tracks.
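The crop, combine and threshold steps can be sketched in a few lines. To keep the example dependency-light it uses plain NumPy rather than OpenCV; the thresholding line behaves like `cv2.threshold` with `cv2.THRESH_BINARY`. The crop rows, the side-by-side layout and the threshold value of 127 are illustrative assumptions, not the values from our code.

```python
import numpy as np


def preprocess(left, right, top, bottom, threshold=127):
    """Crop two grayscale frames to the track rows, join them, and binarize."""
    # Keep only the rows assumed to contain the track.
    left_crop = left[top:bottom, :]
    right_crop = right[top:bottom, :]
    # Place the two crops side by side (assumed layout).
    combined = np.hstack((left_crop, right_crop))
    # Pixels brighter than the threshold become white (255), the rest black (0).
    return np.where(combined > threshold, 255, 0).astype(np.uint8)


# Synthetic stand-ins for the two fisheye frames.
left = np.full((4, 3), 200, dtype=np.uint8)
right = np.full((4, 3), 50, dtype=np.uint8)
binary = preprocess(left, right, top=1, bottom=3)
```

Because the result contains only two pixel values, a darker or lighter ground color changes the model input much less than it changes the raw frames, as long as the track markings stay on the other side of the threshold.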

You can view the image processing code on GitHub.

Video demonstration

This work is licensed under a Creative Commons Attribution 4.0 International License.